Webscraper plus
12/22/2023

Try to describe your needs in as much detail as possible (the exact site URL plus the fields you need). Please feel free to inquire about my services. I'll be glad to help, with no obligation or pressure, until you are comfortable.

Free to use in the browser, plus free credits for automated scraping. "This is EVERYTHING I have been looking for in a web scraper."

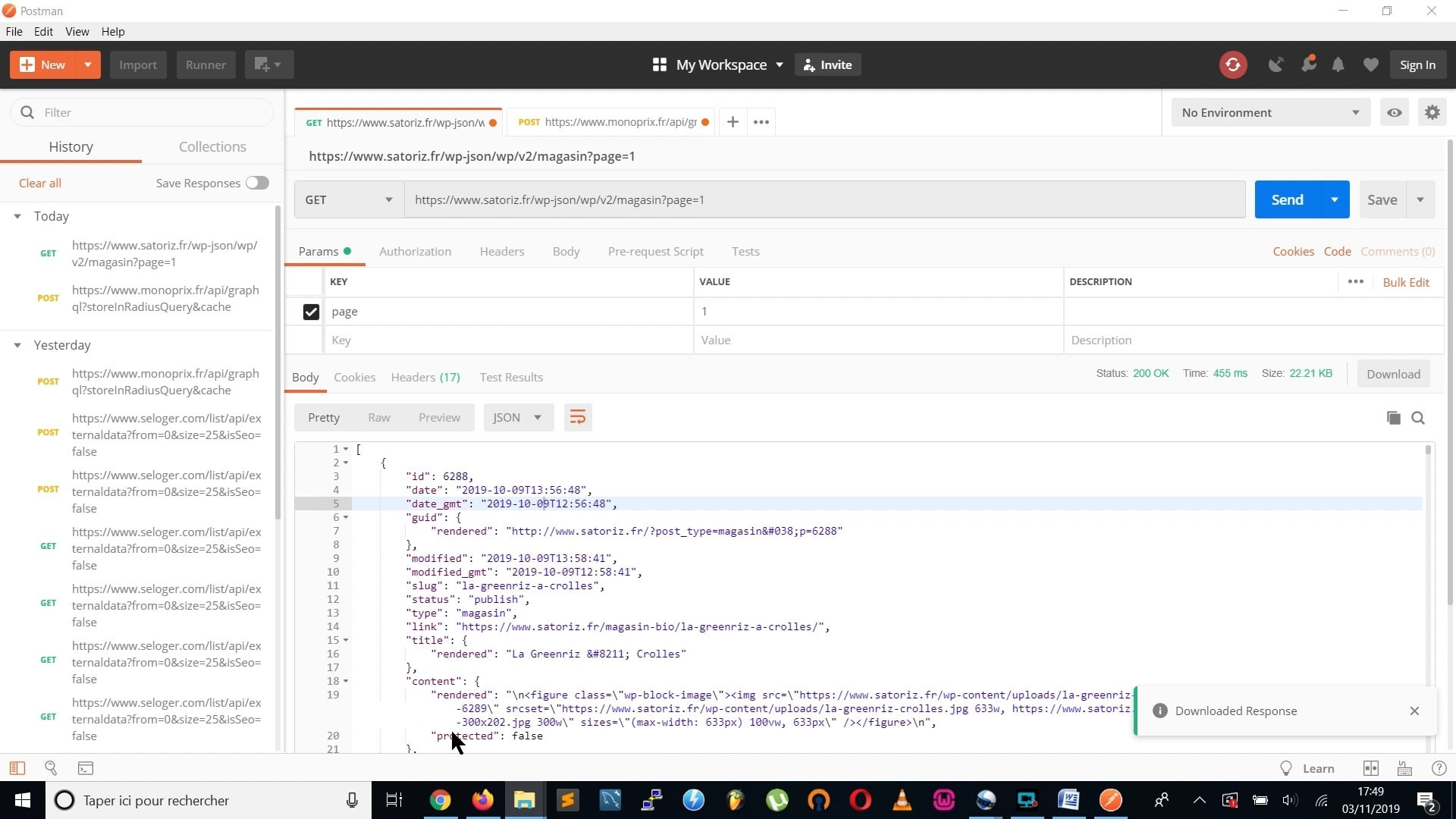

You can expect fast and accurate results.

Build scrapers, scrape sites and export data in CSV format directly from your browser. Use Web Scraper Cloud to export data in CSV, XLSX and JSON formats, access it via API or webhooks, or have it exported to Dropbox, Google Sheets or Amazon S3.

Some of the technical problems I can easily solve:
* Optimizing performance for fast scraping
* RAM management for very large output files

These are what give Web Scraper such flexibility.

Languages: Python, PHP, Java, MySQL, HTML
Libraries: Selenium, Scrapy, Requests, Regex, BeautifulSoup4, Multiprocessing, Pandas

There is also a simple-to-set-up web scraper written in Java. It uses modified regex to quickly write complex patterns that parse data out of a website.

So far I have scraped over 1,000 websites, including hundreds of small and large business directories and e-commerce websites. To get the most data in the shortest time, I run several scraping scripts at once, each with multiple threads. I use Python tooling that can request thousands of URLs at a time to maximize speed, and I use proxies to avoid detection and blocking by the server.

Web Scraper Plus+: if you're lucky enough to be scraping pages that have an incrementing ID in the URL. (wmv; associated files: Basic Counter Page.)
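To make the "incrementing ID in the URL" case and the idea of several requests running at once more concrete, here is a minimal TypeScript sketch. It is not how Web Scraper Plus+ or any script mentioned above works internally; `buildPageUrls`, `mapWithConcurrency` and the `{id}` placeholder are hypothetical names chosen for illustration, and the actual page download is left to whatever `worker` function you pass in.

```typescript
// Hypothetical helpers for illustration; not part of Web Scraper Plus+.

/** Build one URL per ID by substituting the ID into a "{id}" placeholder. */
function buildPageUrls(template: string, firstId: number, lastId: number): string[] {
  const urls: string[] = [];
  for (let id = firstId; id <= lastId; id++) {
    urls.push(template.replace("{id}", String(id)));
  }
  return urls;
}

/**
 * Run `worker` over `items` with at most `limit` calls in flight.
 * Results are returned in the same order as the input items.
 */
async function mapWithConcurrency<T, R>(
  items: T[],
  limit: number,
  worker: (item: T) => Promise<R>,
): Promise<R[]> {
  const results: R[] = new Array(items.length);
  let next = 0; // index of the next item that has not been claimed yet

  // Each lane keeps claiming the next unclaimed item until none are left.
  async function runLane(): Promise<void> {
    while (next < items.length) {
      const index = next++;
      results[index] = await worker(items[index]);
    }
  }

  const laneCount = Math.max(1, Math.min(limit, items.length));
  const lanes: Promise<void>[] = [];
  for (let i = 0; i < laneCount; i++) {
    lanes.push(runLane());
  }
  await Promise.all(lanes);
  return results;
}
```

In practice `worker` would be the function that downloads and parses one page. Keeping `limit` small is a design choice that trades raw speed for a lower chance of overloading or being blocked by the server.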

Hello, I'm one of the TOP RATED PLUS and VERIFIED web scraping, business list building, data extraction and web automation contractors on Upwork, for good reasons. I have over 6 years of experience building automated data extraction systems, and a long history of successfully completed projects and positive feedback. I have completed over 430 projects so far, and the number grows every day. I have already scraped millions of records out of dozens of websites.

I am a full-time freelancer and do web scraping, web crawling, data scraping, web automation, Django, data mining, custom bot development, business list building, email list generation, lead generation, data extraction, data entry and more.

I can use PhantomJS, Scrapy, Beautiful Soup or Selenium as per your requirements, and deliver the scraped dataset in formats such as Excel, CSV, JSON or a database.

* Please contact me before placing an order!
* I'm not extracting personal contact information.
* If you like my gig, please bookmark it or add it as a favorite on Upwork; finding me next time will be much easier!

But CAPTCHAs are rather irritating, aren't they? NuCaptcha and hCaptcha are other advanced CAPTCHA solutions.

Edit what Web Scraper sends to web forms directly from a spreadsheet. Each column maps to one of the controls on the form.
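The idea that each spreadsheet column maps to one form control can be sketched as follows. This is only an illustration under stated assumptions: the first row is assumed to hold the form control names, and `rowsToFormPayloads` and `encodeFormBody` are hypothetical helpers, not functions of Web Scraper.

```typescript
// Hypothetical helpers for illustration; not part of Web Scraper.
type FormPayload = Record<string, string>;

/** Turn spreadsheet rows (first row = form control names) into one payload per data row. */
function rowsToFormPayloads(rows: string[][]): FormPayload[] {
  if (rows.length === 0) return [];
  const [header, ...dataRows] = rows;
  return dataRows.map((row) => {
    const payload: FormPayload = {};
    header.forEach((controlName, column) => {
      // A missing cell is sent as an empty string.
      payload[controlName] = row[column] ?? "";
    });
    return payload;
  });
}

/** Encode a payload as a URL-encoded form body (spaces become %20). */
function encodeFormBody(payload: FormPayload): string {
  return Object.keys(payload)
    .map((name) => `${encodeURIComponent(name)}=${encodeURIComponent(payload[name])}`)
    .join("&");
}
```

Each data row then becomes one form submission, so editing a cell in the spreadsheet changes exactly one field of one request.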

Welcome to my Web Scraper/Bot Development GIG :)

Are you tired of manual data collection and want to AUTOMATE the boring stuff? Then you are in the right place. Web scraping tools automate web-based data collection. I can develop a web data scraper, bot, script or extractor for you. I have developed hundreds of bots so far, and the number is still increasing. I write scripts mostly in Python, but I can also provide scripts in PHP and Java.

CAPTCHAs are one of the most popular anti-scraping techniques implemented by website managers. reCAPTCHA v3 is a CAPTCHA integration solution from Google for detecting bot traffic on websites.

The tutorial's `index.ts` begins with `import axios from 'axios'`, declares the URL we're scraping as `url`, creates a new Axios instance with `const AxiosInstance = axios.create()`, and then sends an async HTTP GET request to that URL through `AxiosInstance`. We created an interface, `PlayerData`, that represents the structure of the data we're parsing. We then parse this information from the HTML we receive from the webpage and create an array of objects with that data. After saving your code, you should see an array printed in your console with the information on each player. Pretty awesome, right? Plus, all the code is typesafe! You can find the source code for this tutorial here.
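The parsing step, turning received HTML into an array of typed objects, might look roughly like the sketch below. The tutorial's real `PlayerData` fields and its parsing code are not shown in the text, so the `rank`, `name` and `goals` fields, the table layout and the `parsePlayers` function are all assumptions made for illustration. The sketch uses plain regular expressions on a string rather than an HTML parsing library, which is only reasonable for simple, well-formed tables.

```typescript
// Hypothetical shape: the tutorial's actual PlayerData fields are not shown.
interface PlayerData {
  rank: number;
  name: string;
  goals: number;
}

/** Extract one PlayerData per table row that has at least three <td> cells. */
function parsePlayers(html: string): PlayerData[] {
  const rowPattern = /<tr[^>]*>([\s\S]*?)<\/tr>/g;
  const cellPattern = /<td[^>]*>([\s\S]*?)<\/td>/g;
  const players: PlayerData[] = [];

  let rowMatch: RegExpExecArray | null;
  while ((rowMatch = rowPattern.exec(html)) !== null) {
    const cells: string[] = [];
    cellPattern.lastIndex = 0; // restart cell matching for each new row
    let cellMatch: RegExpExecArray | null;
    while ((cellMatch = cellPattern.exec(rowMatch[1])) !== null) {
      // Drop nested tags such as links and trim surrounding whitespace.
      cells.push(cellMatch[1].replace(/<[^>]+>/g, "").trim());
    }
    if (cells.length < 3) continue; // e.g. header rows built from <th> cells
    players.push({
      rank: Number(cells[0]),
      name: cells[1],
      goals: Number(cells[2]),
    });
  }
  return players;
}
```

Given the response body of the GET request as a string, `console.log(parsePlayers(body))` would print the array of player objects. Because the return type is `PlayerData[]`, the compiler flags any later code that misspells a field or treats `goals` as a string.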
